Before a BPO contract is signed, one question is rarely given a clear answer: what does month one look like?

Not the pitch deck. Not the case study. The real steps. Who does what, when, and with what proof. From signing to a live campaign that sends daily reports.

According to the 2025 Deloitte Global Business Services Survey, 80% of leaders plan to keep or grow their outsourcing spend. The global BPO market is estimated at $358.58 billion in 2026, up from $328.4 billion in 2025, and is projected to reach $695.77 billion by 2033 at a 9.9% CAGR (Grand View Research, 2026). Outsourcing is not slowing down. But the bar for vendors in the first 90 days has never been higher.

Research shows the top reason BPO deals fail is not cost or skill. It is unclear expectations during onboarding (Bridgeforce, 2025). The onboarding window is where trust is either built on a clear, written plan or lost to vague timelines and missing proof.

A managed BPO deal follows a set process. It has defined phases, written deliverables at the end of each phase, and performance gates. This guide walks through each phase of the first 90 days. It shows who owns each step, what proof is delivered, and what to ask any vendor before signing.

Why the First 90 Days Define the Entire Engagement

The first 90 days set every pattern for the rest of the deal. Reporting cadence. Communication norms. The accountability structure. The client’s trust in the data. All of it is locked in within three months.

A 2025 report from the International Customer Management Institute (ICMI) reached the same conclusion: the first 90 days of a BPO contract are the most critical period, setting both the rules and the tone for the long-term partnership. Deals that lack clear milestones in this window are far more likely to end in disputes, cost overruns, and vendor switches.

A strong 90-day onboarding produces three results:

- A live campaign with clean data. By day 90, enough data has been gathered: contact rate by lead source, qualification rate by agent, and transfer quality trends. Without a clean baseline, any fix is a guess. Day 90 is when guessing stops.

- A tested working relationship. Both sides know how the deal works day to day: what the client expects from reports, how problems are raised, and what the review schedule looks like. Getting this right in 90 days prevents the clash that shows up at month four, when expectations and actual operations no longer match.

- A performance story for leadership. Ops leaders need to show their CFO and CEO that outsourcing is working. Day 90 is the first real review. A strong first 90 days creates a clear story. A weak one creates three months of excuses.

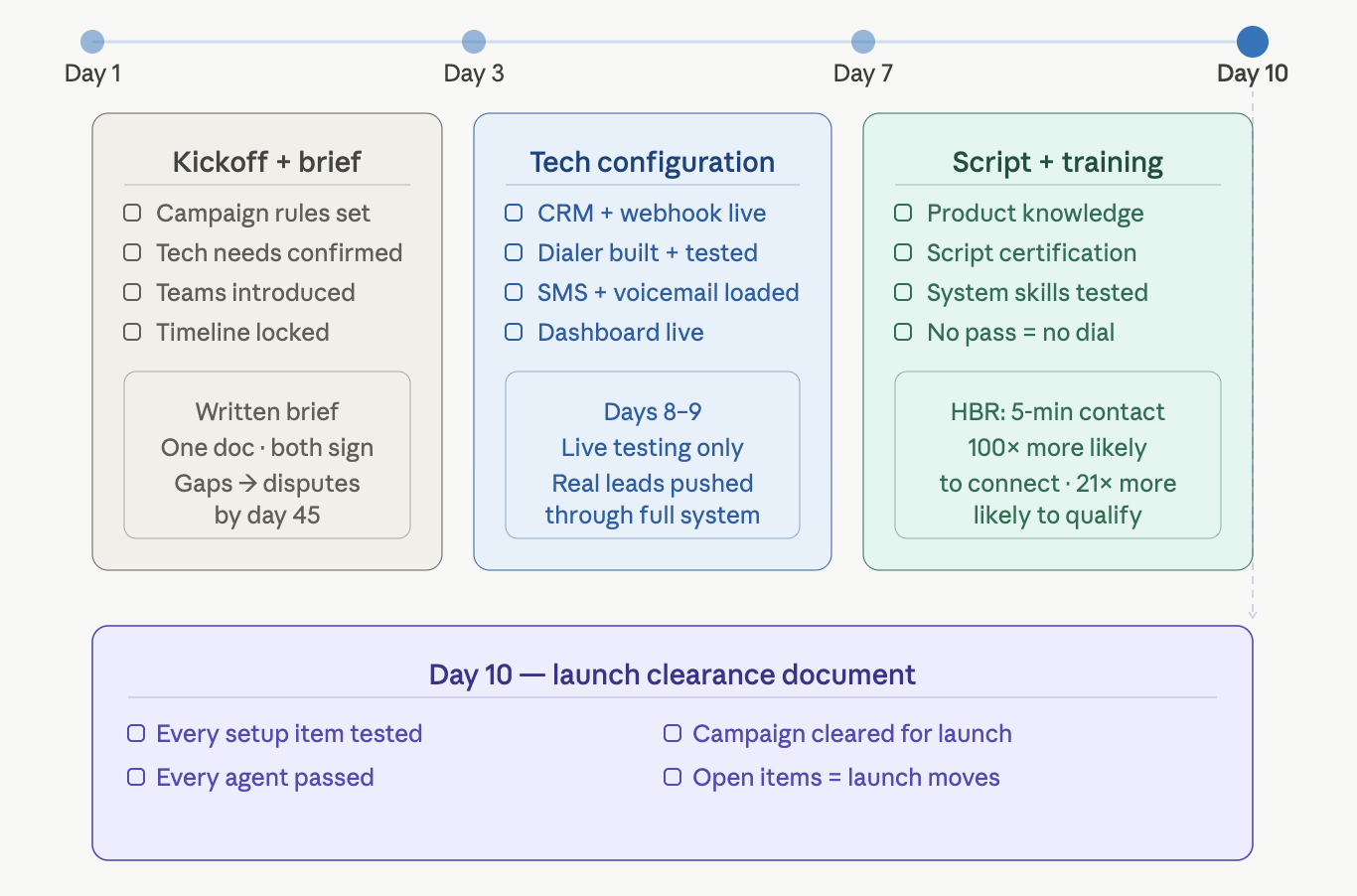

Days 1–10: Configuration, Integration, and Infrastructure

The first 10 days are setup days. No live dials are made. No performance data is created. The work done here determines whether everything after launch runs smoothly or requires costly fixes.

Days 1–3: Contract Execution and Kickoff

The deal starts with a working kickoff session. Not a welcome call. A real working meeting. These items are covered:

- Campaign rules are set: target market, geography, who qualifies, daily volume caps, calling hours, and lead sources.

- Tech needs are confirmed: CRM access is set up, the dialer is picked, and API specs are reviewed.

- Teams are introduced: the client’s main contact meets the operator’s campaign manager, supervisor, and QA lead, and both sides agree on communication rules.

- The timeline is locked in: each milestone gets a named owner and a due date, agreed by both sides.

A written campaign brief is produced. One document. Every decision from the session. Both sides sign off before tech work starts. Any gap left open at this point tends to turn into a dispute by day 45.

Days 3–7: Technical Configuration

The operator’s tech team builds the platform:

- CRM link is set up: a webhook or API connects the lead source to the CRM. It is tested with a real lead to confirm that records are created correctly.

- Dialer setup is done: campaign rules, calling hours by timezone, disposition codes, routing, and transfer lines are built and tested.

- SMS and voicemail tools are loaded: auto-SMS triggers, ringless voicemail files, and follow-up sequences go into the system.

- The reporting dashboard is built: client login credentials are sent, and the dashboard is checked.

By day 7, the tech stack is ready. Days 8 and 9 are used for live testing. Not in a sandbox. Real leads are pushed through the full system.

Days 8–10: Script Finalization and Agent Training

Script work runs concurrently with tech setup. A draft script is sent by day 5. It is built from the campaign brief, the qualifying rules, and the operator’s industry knowledge. The client reviews by day 7. The final script is sent on day 8.

Agent training starts on day 8 and wraps by day 10:

- Product knowledge is covered: the client leads a session on the product, the target buyer, and the market.

- Script certification is run: the operator leads sessions on script delivery, objection handling, compliance language, and transfer steps.

- System skills are tested: each agent shows they can use the dialer, log dispositions in the CRM, and run a transfer.

No agent dials without passing. This is not a preference. It is a contract rule.

Research from Harvard Business Review shows that firms that reach leads within 5 minutes are 100 times more likely to connect, and 21 times more likely to qualify the lead, than firms that wait 30 minutes. That speed-to-lead standard is baked into the systems built during this window.

Deliverable at Day 10: Written proof from the operator that every setup item has been tested, every agent has passed, and the campaign is cleared for launch. If anything is still open, the launch date moves. No open items go live.
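The day-10 gate reduces to one rule: launch is cleared only when the list of open items is empty. The sketch below is a minimal illustration, assuming a hypothetical checklist in which each setup item carries a "tested" flag and each agent carries pass/fail results for script certification and the systems test. The class and field names are illustrative, not part of any real dialer or CRM.

```python
from dataclasses import dataclass


@dataclass
class SetupItem:
    name: str
    tested: bool  # True once the item passed a live end-to-end test


@dataclass
class Agent:
    name: str
    passed_script_certification: bool
    passed_systems_test: bool

    @property
    def cleared_to_dial(self) -> bool:
        # Both gates must be passed; there is no partial clearance.
        return self.passed_script_certification and self.passed_systems_test


def open_launch_items(setup_items, agents):
    """Return every item still blocking launch; an empty list means cleared."""
    blockers = [f"untested: {item.name}" for item in setup_items if not item.tested]
    blockers += [f"not certified: {a.name}" for a in agents if not a.cleared_to_dial]
    return blockers


# Hypothetical day-10 snapshot.
setup = [
    SetupItem("CRM webhook", tested=True),
    SetupItem("transfer lines", tested=False),
    SetupItem("timezone calling rules", tested=True),
]
team = [
    Agent("Agent A", passed_script_certification=True, passed_systems_test=True),
    Agent("Agent B", passed_script_certification=False, passed_systems_test=True),
]

blockers = open_launch_items(setup, team)
print("cleared for launch" if not blockers else blockers)
# ['untested: transfer lines', 'not certified: Agent B']
```

Returning the blockers, rather than a bare yes/no, keeps the "launch date moves" conversation concrete: each entry is a specific item with an owner to chase.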

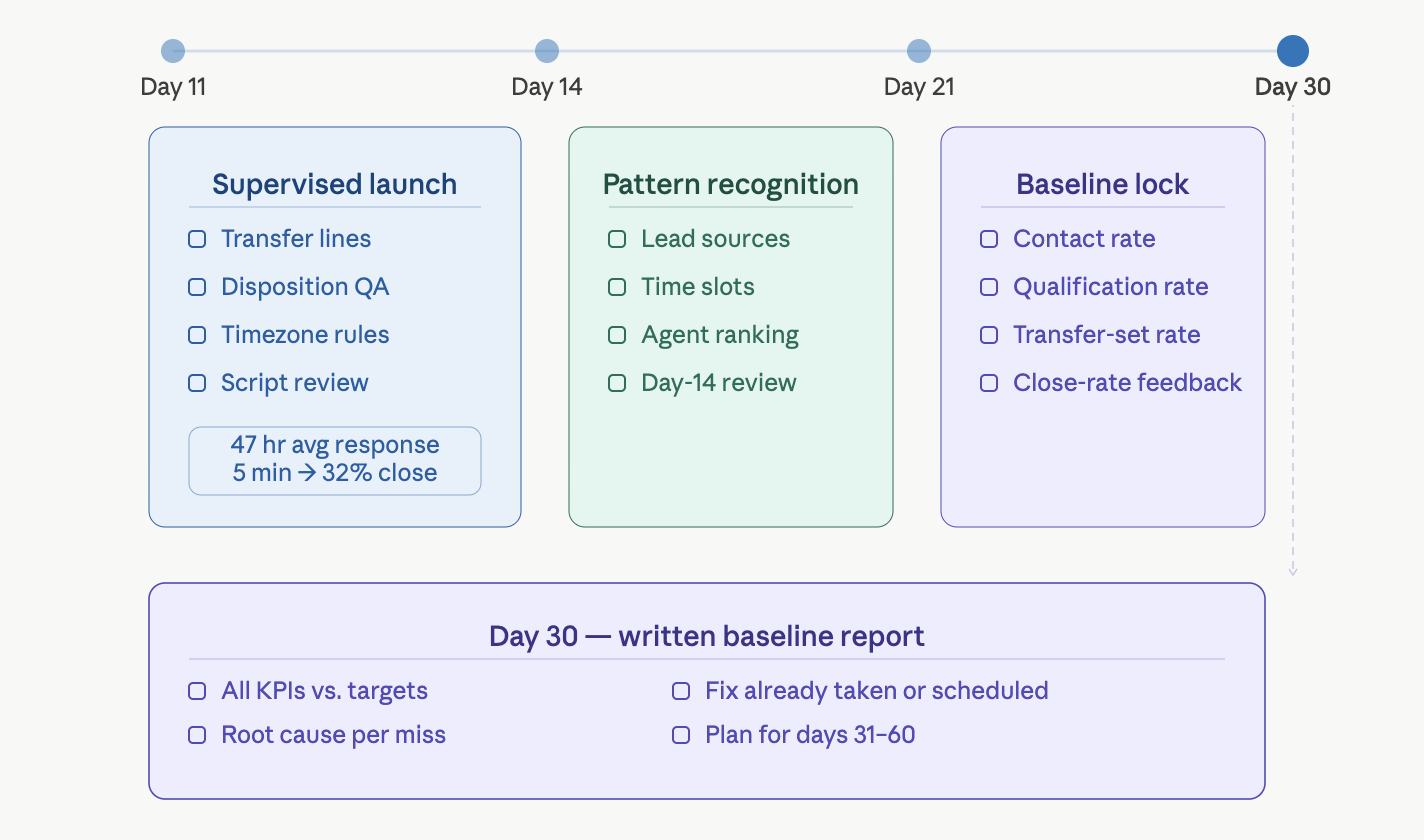

Days 11–30: Live Launch, Calibration, and Baseline Establishment

Days 11 through 30 are calibration days. The campaign is live. Data is coming in. But almost nothing from this window should be read as final. This is baseline data. The next phase will build on it.

Days 11–14: Supervised Launch

The first four days of live calls run under tighter supervision than normal. The campaign manager tracks results in real time, not just in end-of-day reports. The supervisor is on the floor during all calling hours.

Key items watched during this window:

- Transfer lines: every transfer is checked to confirm that the call connects and that the closer gets the right briefing.

- Disposition accuracy: the QA team spot-checks dispositions live to catch errors before they turn into weeks of bad data.

- Calling window rules: timezone enforcement is checked against real dial timestamps for every region.

- Script compliance: QA reviews 25–30% of calls from the first three days to catch drift before it becomes habit.
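The calling-window check means converting each logged dial timestamp to the lead’s local time and testing it against the permitted window. A minimal sketch, assuming the dialer log provides a region and a UTC timestamp per dial. The region map and the 08:00 to 21:00 window are hypothetical stand-ins for whatever the campaign brief specifies, and the fixed UTC offsets ignore daylight saving time; a production audit would map regions to IANA zone names with Python’s `zoneinfo` module instead.

```python
from datetime import datetime, time, timedelta, timezone

# Hypothetical region map. Fixed UTC offsets ignore daylight saving time;
# a real audit would map regions to IANA zone names via zoneinfo.
REGION_TZ = {
    "US-Eastern": timezone(timedelta(hours=-5)),
    "US-Central": timezone(timedelta(hours=-6)),
    "US-Pacific": timezone(timedelta(hours=-8)),
}

# Hypothetical permitted local calling window from the campaign brief.
WINDOW_START = time(8, 0)
WINDOW_END = time(21, 0)  # exclusive: a dial at exactly 21:00 is a violation


def calling_window_violations(dials):
    """Return IDs of dials placed outside the local calling window.

    Each dial is (dial_id, region, utc_timestamp), where utc_timestamp is a
    timezone-aware datetime taken from the dialer log.
    """
    violations = []
    for dial_id, region, ts_utc in dials:
        local_time = ts_utc.astimezone(REGION_TZ[region]).time()
        if not (WINDOW_START <= local_time < WINDOW_END):
            violations.append(dial_id)
    return violations


utc = timezone.utc
dial_log = [
    ("d1", "US-Eastern", datetime(2025, 1, 15, 13, 30, tzinfo=utc)),  # 08:30 local
    ("d2", "US-Pacific", datetime(2025, 1, 15, 15, 0, tzinfo=utc)),   # 07:00 local
    ("d3", "US-Central", datetime(2025, 1, 16, 3, 0, tzinfo=utc)),    # 21:00 local
]
print(calling_window_violations(dial_log))  # ['d2', 'd3']
```

Running the check against every dial, not a sample, is what turns "timezone enforcement" from a configuration claim into evidence.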

A 2026 Optifai study found the average B2B lead response time across 939 firms is 47 hours, and only 23% respond within five minutes. Leads reached in under 5 minutes had a 32% close rate, 2.6 times higher than the 12% for those reached after 24 hours. The supervised launch is where the operator confirms that its speed-to-lead system beats these benchmarks from the first live day.
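Measuring speed-to-lead during the supervised launch takes only two timestamps per lead. A minimal sketch, assuming the CRM can export when each lead was created and when the first dial or SMS went out (the tuple layout is hypothetical). The five-minute default mirrors the benchmark above. Reporting the median alongside the in-SLA share matters because a few very slow leads can pull an average far above what most leads actually experience.

```python
from datetime import datetime, timedelta
from statistics import median


def speed_to_lead(leads, sla_minutes=5):
    """Summarize response times for a non-empty list of leads.

    Each lead is (created_at, first_attempt_at). Assumes every lead has a
    first attempt; unworked leads would need separate handling.
    """
    minutes = [(first - created).total_seconds() / 60 for created, first in leads]
    within_sla = sum(1 for m in minutes if m <= sla_minutes)
    return {
        "median_minutes": median(minutes),
        "share_within_sla": within_sla / len(minutes),
    }


start = datetime(2025, 1, 15, 9, 0)
sample = [
    (start, start + timedelta(minutes=2)),
    (start, start + timedelta(minutes=4)),
    (start, start + timedelta(minutes=30)),
    (start, start + timedelta(minutes=3)),
]
print(speed_to_lead(sample))
# {'median_minutes': 3.5, 'share_within_sla': 0.75}
```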

Days 14–21: Performance Pattern Recognition

By day 14, enough data exists to spot patterns. Which lead sources have the best contact rates? Which time slots work best? Which agents are above or below average? Which disposition codes are used incorrectly?
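Each of these day-14 questions comes down to the same operation: group calls by one attribute and compare rates across the groups. A minimal sketch, assuming the dialer can export one record per dial with a lead source, agent, hour, and contacted flag (all field names are hypothetical). The "below average" test uses a simple unweighted mean across groups, which is one reasonable choice among several.

```python
from collections import defaultdict


def rate_by(calls, key, outcome="contacted"):
    """Group call records by `key` and return the share where `outcome` is True."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for call in calls:
        group = call[key]
        totals[group] += 1
        hits[group] += bool(call[outcome])
    return {group: hits[group] / totals[group] for group in totals}


def below_average(rates):
    """Groups whose rate is under the simple (unweighted) mean of all groups."""
    mean = sum(rates.values()) / len(rates)
    return sorted(group for group, rate in rates.items() if rate < mean)


# Hypothetical slice of a two-week call log (one dict per dial).
calls = [
    {"lead_source": "web_form", "agent": "A", "hour": 9, "contacted": True},
    {"lead_source": "web_form", "agent": "A", "hour": 17, "contacted": True},
    {"lead_source": "purchased_list", "agent": "A", "hour": 13, "contacted": False},
    {"lead_source": "web_form", "agent": "B", "hour": 9, "contacted": True},
    {"lead_source": "purchased_list", "agent": "B", "hour": 17, "contacted": True},
    {"lead_source": "purchased_list", "agent": "B", "hour": 13, "contacted": False},
    {"lead_source": "purchased_list", "agent": "C", "hour": 13, "contacted": False},
    {"lead_source": "web_form", "agent": "C", "hour": 9, "contacted": False},
]

print(rate_by(calls, "lead_source"))           # {'web_form': 0.75, 'purchased_list': 0.25}
print(below_average(rate_by(calls, "agent")))  # ['C']
```

Passing `"hour"` as the key answers the time-slot question the same way. With only a handful of calls per agent, one or two outcomes swing a rate sharply, which is why the day-14 review treats these numbers as patterns to investigate rather than verdicts.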

The day-14 review is the first real data talk between the client and the operator. It is not a crisis call. It is a calibration meeting. Every new campaign has surprises in week two. The review logs them and builds an action plan with owners and deadlines.

Days 21–30: Baseline Establishment

By day 30, enough data has been gathered to set a real baseline against target KPIs:

- Contact rate by lead source

- Qualification rate on contacted leads

- Transfer-set rate on qualified contacts

- Show rate on transferred prospects

- Early close rate feedback from the closer team
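The first four KPIs form a funnel in which each rate uses the previous stage as its denominator, as the list’s wording ("on contacted leads", "on qualified contacts", "on transferred prospects") implies. A minimal sketch, assuming contact rate is measured against leads dialed. Close-rate feedback comes from the client’s closers and is left out. The counts are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FunnelCounts:
    leads_dialed: int
    contacts: int
    qualified: int
    transfers_set: int
    shows: int


def ratio(numerator: int, denominator: int) -> float:
    # Guard against empty stages early in a campaign.
    return numerator / denominator if denominator else 0.0


def funnel_rates(c: FunnelCounts) -> dict:
    """Stage-to-stage rates for the day-30 baseline."""
    return {
        "contact_rate": ratio(c.contacts, c.leads_dialed),
        "qualification_rate": ratio(c.qualified, c.contacts),
        "transfer_set_rate": ratio(c.transfers_set, c.qualified),
        "show_rate": ratio(c.shows, c.transfers_set),
    }


baseline = FunnelCounts(leads_dialed=1000, contacts=250, qualified=100,
                        transfers_set=60, shows=45)
print(funnel_rates(baseline))
# {'contact_rate': 0.25, 'qualification_rate': 0.4, 'transfer_set_rate': 0.6, 'show_rate': 0.75}
```

Because each denominator is the previous stage, the four rates multiply back to shows per lead dialed: 0.25 × 0.4 × 0.6 × 0.75 = 0.045, or 45 shows per 1,000 leads. A miss at any single stage therefore scales the whole result.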

The day-30 review is the first formal check. Real numbers are compared to the targets in the campaign brief. For every metric below target, the operator gives a clear root cause and a specific fix. Not a plan to look into it. An action already taken or scheduled.

Deliverable at Day 30: A written report on the full 30-day baseline: all KPIs against targets, root cause for any misses, and the fixes planned for days 31–60.

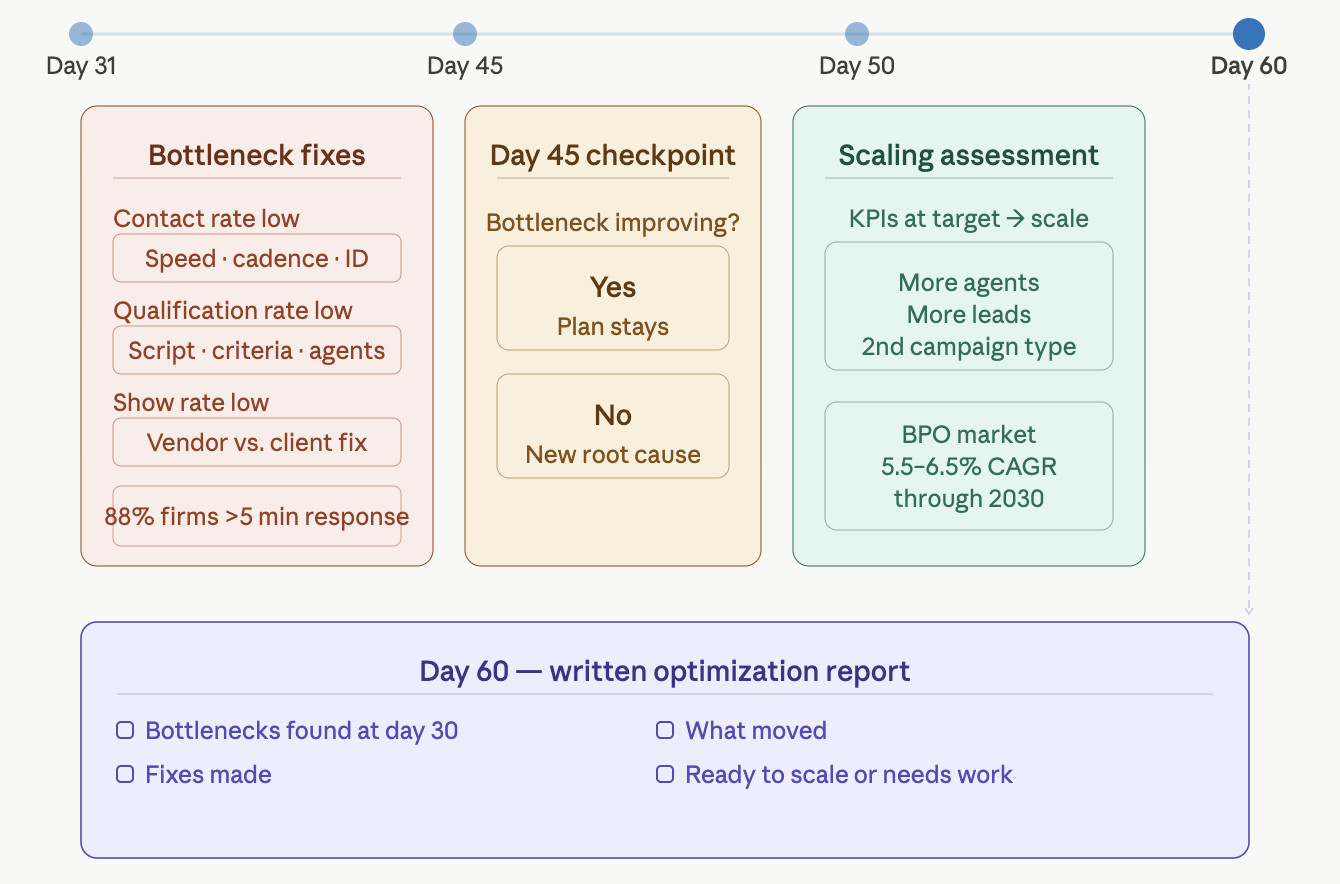

Days 31–60: Optimization and Scaling

Days 31 through 60 are optimization days. The baseline is set. Calibration is done. The campaign now runs against clear targets based on the previous month’s results.

Optimization Priorities From the 30-Day Baseline

In its first 30 days, every campaign has one main bottleneck: the one metric that, if fixed, creates the biggest drop in cost per lead. The days 31–60 plan targets that one fix rather than small tweaks to every number at once.
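Because the funnel rates multiply, lifting any one rate by some factor raises shows by that same factor, so the single highest-value fix can be found with simple arithmetic. A minimal sketch under several assumptions: "cost per lead" is modelled here as spend per shown transfer, monthly spend and lead volume stay fixed, and raising one rate to target leaves the others unchanged (real campaigns rarely behave that cleanly). All figures are hypothetical.

```python
def cost_per_show(rates, spend, leads_dialed):
    """Spend divided by shows, where shows = leads x product of funnel rates."""
    shows = leads_dialed
    for rate in rates.values():
        shows *= rate
    return spend / shows


def main_bottleneck(rates, targets, spend, leads_dialed):
    """Return (metric, cost_per_show_after_fix) for the below-target metric
    whose fix cuts cost per show the most, or None if all targets are met."""
    best = None
    for metric, target in targets.items():
        if rates[metric] >= target:
            continue  # already at or above target
        fixed = {**rates, metric: target}
        cost = cost_per_show(fixed, spend, leads_dialed)
        if best is None or cost < best[1]:
            best = (metric, cost)
    return best


rates = {"contact_rate": 0.20, "qualification_rate": 0.40,
         "transfer_set_rate": 0.60, "show_rate": 0.50}
targets = {"contact_rate": 0.25, "qualification_rate": 0.45,
           "transfer_set_rate": 0.60, "show_rate": 0.70}

print(round(cost_per_show(rates, 12_000, 1_000), 2))  # 500.0
metric, cost = main_bottleneck(rates, targets, 12_000, 1_000)
print(metric, round(cost, 2))  # show_rate 357.14
```

Show rate wins here because it has the largest relative gap to target (0.50 to 0.70 is a 1.4x lift, versus 1.25x for contact rate), not the largest absolute gap.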

Common bottlenecks and their day-31 fixes:

- Contact rate below target: The focus shifts to speed-to-lead timing, cadence depth, and caller ID reputation. Dialer settings, SMS timing, or calling windows are adjusted based on hour-by-hour contact rate data. A 2026 Apten report found 88% of HVAC and home services firms take more than five minutes to respond. That gap is closed by the automation built in days 1–10.

- Qualification rate below target: The focus shifts to script compliance, criteria use, and agent-level gaps. Flagged calls from the first 30 days are reviewed in a team session. Scripts are updated, approved, and pushed back out.

- Show rate below target: The cause is split into vendor-side issues (transfer quality, prospect engagement at handoff) and client-side issues (closer availability during transfer hours). Each gets a different fix.

Day 45 Review: Mid-Optimization Checkpoint

A check at day 45 tests whether the day-31 fixes are working. If the main bottleneck is improving, the plan stays. If the number has not moved, the root cause is revisited, and a new plan is made.

Days 50–60: Volume Scaling Assessment

If KPIs are at or above target by day 50, the day-60 review adds a scaling plan: more agents, more leads, or a second campaign type. Scaling before results are proven wastes capacity. Scaling after they are proven compounds the gains.
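The day-50 rule works as a strict gate: every KPI at or above target, or no scaling plan yet. A minimal sketch, assuming the KPIs and targets are the rate figures from the campaign brief. The names and values are hypothetical. Returning the misses alongside the yes/no answer gives the day-60 report its "still needs work" list directly.

```python
def scaling_gate(actuals, targets):
    """Day-50 check: return (ready, misses); ready only if no KPI is below target."""
    misses = {kpi: (actuals[kpi], target)
              for kpi, target in targets.items() if actuals[kpi] < target}
    return not misses, misses


ready, misses = scaling_gate(
    actuals={"contact_rate": 0.26, "transfer_set_rate": 0.62, "show_rate": 0.65},
    targets={"contact_rate": 0.25, "transfer_set_rate": 0.60, "show_rate": 0.70},
)
print(ready, misses)  # False {'show_rate': (0.65, 0.7)}
```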

The Everest Group’s 2025 BPS Top 50 report noted the BPO market is growing at a 5.5–6.5% CAGR through 2030. The most successful deals scale step by step after a proven baseline, not by rushing to volume before controls are tested.

Deliverable at Day 60: A written optimization report: which bottlenecks were found at day 30, what fixes were made, what moved, and whether the campaign is ready to scale or still needs work.

Days 61–90: Steady State and Relationship Calibration

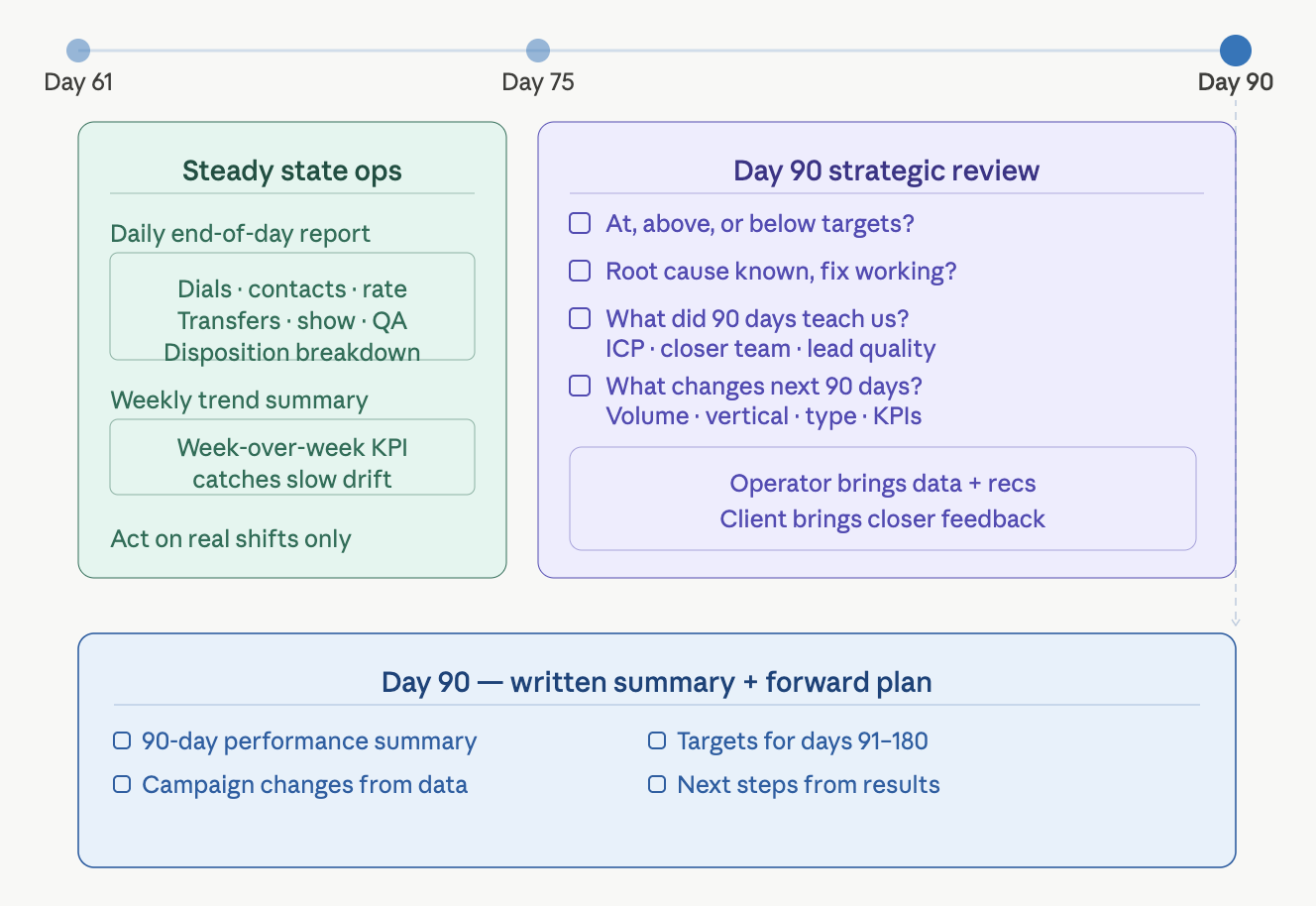

Days 61 through 90 mark the shift from new campaign to managed operation. The setup is stable. The baseline is set. The main bottleneck has been fixed. The campaign now runs in steady state. Management moves from fixing problems to tracking performance.

What Steady State Looks Like Operationally

Daily reports shift to the standard end-of-day format: dials, contacts, contact rate, transfers set, show rate, QA scores, and disposition breakdown. The report is checked each morning. Action is taken only on real shifts, not every data point.
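"Real shifts, not every data point" needs a working definition. One common approach, sketched here as an assumption rather than an industry standard, is to compare today’s figure with a trailing window and act only when the gap clears both a fixed floor and the normal day-to-day spread. The seven-day window, three-point floor, and two-standard-deviation multiplier are all hypothetical.

```python
from statistics import mean, pstdev


def is_real_shift(history, today, min_gap=0.03, z=2.0):
    """Flag today's KPI only if it departs from the trailing mean by more than
    a fixed floor AND by more than z standard deviations of recent daily noise."""
    center = mean(history)
    noise = pstdev(history)
    gap = abs(today - center)
    return gap > min_gap and gap > z * noise


# Hypothetical trailing week of daily contact rates.
last_seven_days = [0.25, 0.24, 0.26, 0.25, 0.24, 0.26, 0.25]
print(is_real_shift(last_seven_days, 0.23))  # False: under the three-point floor
print(is_real_shift(last_seven_days, 0.20))  # True: a five-point drop
```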

The weekly trend summary becomes the main rhythm for reviews. Week-over-week KPI comparisons catch slow drift: a gradual decline that does not show up in a daily report but adds up over four to six weeks into a big gap from the target.
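Slow drift is straightforward to define in code: no single week looks alarming, but the cumulative decline does. A minimal sketch, with hypothetical tolerances of one point per week and three points across the window. Real thresholds would come from the campaign’s targets and its normal week-to-week noise.

```python
def slow_drift(weekly, weekly_tolerance=0.01, cumulative_tolerance=0.03):
    """True when no single week-over-week drop exceeds weekly_tolerance,
    yet the total decline across the window exceeds cumulative_tolerance."""
    drops = [prev - cur for prev, cur in zip(weekly, weekly[1:])]
    each_week_looks_fine = all(drop <= weekly_tolerance for drop in drops)
    total_decline = weekly[0] - weekly[-1]
    return each_week_looks_fine and total_decline > cumulative_tolerance


# Hypothetical six weeks of contact rate: under one point lost per week,
# almost four points lost overall.
contact_rate = [0.250, 0.242, 0.235, 0.227, 0.220, 0.212]
print(slow_drift(contact_rate))           # True
print(slow_drift([0.250, 0.200]))         # False: a sudden drop, not drift
print(slow_drift([0.250, 0.249, 0.251]))  # False: stable
```

Sudden drops are deliberately excluded because the daily report already surfaces those. Comparing only the first and last week is the simplest choice; a fitted trend line would be less sensitive to one noisy week at either end.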

The Day-90 Strategic Review

A 2025 Bridgeforce analysis of BPO risk found that deals without formal review milestones are far more likely to see misalignment, scope creep, and vendor replacement. The day-90 review is built to prevent all three.

Every 90-day review covers four questions:

- Is the campaign at, above, or below the targets in the campaign brief?

- If results are below target on any metric, is the root cause known, and is the fix working?

- What has been learned about the ICP, the closer team, and lead quality from 90 days of data that was not known at launch?

- What should change in the next 90 days: volume, vertical, campaign type, or target KPIs?

The day-90 review is not a renewal talk. It is a planning session. The operator brings data and recommendations. The client brings feedback on what the closer team sees and what the business needs next quarter.

Deliverable at Day 90: A written 90-day summary and a forward plan: targets for days 91–180, any campaign changes the data supports, and next steps based on results.

What to Ask a BPO Vendor Before Signing to Verify This Timeline Is Real

The 30/60/90 day plan above is a standard, not a promise. Any vendor can describe a structured onboarding. These questions are built to tell apart vendors who have done this from those who hope to.

Question 1: Can a written launch plan with milestones and named owners be sent before the contract is signed?

The right answer is yes, sent with the proposal or within 24 hours. The wrong answer: “A plan will be made after signing.”

Question 2: What is tested before the first live dial?

The right answer is a clear list: transfer line testing, timezone checks, disposition routing, and agent certification. The wrong answer: “A thorough review is done before launch.”

Question 3: What happens if the campaign misses its day-30 KPI targets?

The right answer names specific fixes, a timeline, and a client escalation path if results do not improve. The wrong answer: “We will work together to figure out what happened.”

Question 4: What does the day-14 review cover, and who attends?

The right answer: the campaign manager, supervisor, and QA lead present two weeks of data against targets, with root causes and fixes. The wrong answer: “A check-in call will be scheduled.”

Question 5: What percentage of campaigns launch on schedule within 30 days?

The right answer is a real number with an honest note on delays. The wrong answer: “We always launch on time.”

Red Flags That Signal a Poor Onboarding Before It Starts

Five patterns in pre-contract conversations are strong signs of poor onboarding:

- No written campaign brief. A vendor who launches without a signed brief has no written agreement on what the campaign should produce. There is no standard for what counts as failure in month one.

- Vague launch timelines. “Two to three weeks” is not a timeline. A real plan has dates, milestones, and named owners. Vague timelines mean there is no set process.

- No agent certification rule. A vendor who skips certification before live dials puts the client at risk of script failures from day one. Certification is the gate. It decides if agents are ready.

- No day-30 review commitment. A vendor who will not commit to a formal review at day 30 has no accountability in month one. Without it, poor results can persist for months before enough data is available to push back.

- Resistance to written targets. A vendor who will not put specific contact rate, transfer-set rate, or show rate targets in the contract does not want to own outcomes. Operators own outcomes. Vendors own activities. That gap tells you which model you are looking at.

Frequently Asked Questions

How long does BPO onboarding take?

What should be expected from a BPO vendor in the first 30 days?

How can it be determined if a BPO onboarding is going well?

What is a 30/60/90 day plan in BPO?

What is the difference between BPO onboarding and BPO implementation?

What happens after the 90-day BPO onboarding period?

Build the First 90 Days Before You Sign

The gap between a BPO deal that works and one that fails is almost always set in the first 90 days. The infrastructure, the data baseline, the relationship, and the accountability: all built in this window.

A free contact rate audit is the first step. A written 90-day launch plan comes with every proposal: milestones, targets, and what to expect in the dashboard on day 30. Request an audit →